This guide explains the emerging threat of deepfakes and identity theft.

As technology advances at an unprecedented pace, our reliance on the digital world keeps growing. This digital evolution, however, comes with its own set of risks.

One particularly worrying trend is the growing problem of identity theft enabled by deepfake technology.

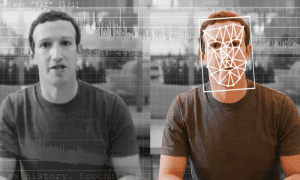

Deepfakes, a term combining “deep learning” and “fake”, are synthetic media created with sophisticated artificial intelligence (AI) algorithms that replicate individuals’ physical attributes and behaviors with uncanny accuracy.

Originally, deepfakes were seen as a novelty, used primarily for entertainment purposes.

However, their potential misuse in committing fraud and identity theft has made them a concern for celebrities, private individuals, businesses, and law enforcement alike.

Identity theft is a long-standing issue that continues to evolve alongside technological advancements. The introduction of deepfake technology into the mix intensifies this concern.

As digital forensics experts, we need to understand the possible scenarios where deepfakes can be used for identity theft and be prepared to mitigate the associated risks.

Deepfakes in Identity Theft and Fraud

Deepfakes’ capacity to mimic people’s voices, physical appearances, and mannerisms opens a plethora of opportunities for fraudsters.

Here are some ways deepfakes are being used to commit fraud:

Impersonation Scams

Cybercriminals are employing deepfakes to impersonate trusted individuals or high-ranking executives, tricking employees into transferring funds or revealing sensitive information.

These scams, often called CEO fraud and closely related to Business Email Compromise (BEC), have become more convincing with deepfake technology.

Recently, deepfake audio has become a popular tool for impersonation scams.

Social Engineering Attacks

Fraudsters can use deepfakes to create convincing, personalized messages, fooling victims into divulging personal data or performing actions that compromise their security.

Deepfake-based Phishing

Deepfakes can enhance traditional phishing techniques by making communications seem more authentic, thereby increasing their effectiveness.

False Evidence and Discrediting

Deepfakes can be used to create false video or audio evidence, potentially causing personal harm or legal issues. They can also be used to discredit individuals, corporations, or political entities.

Misinformation and Propaganda

Deepfakes can amplify the spread of misinformation and propaganda, posing threats to personal and national security.

As deepfakes continue to advance and become more widespread, their misuse in identity theft and fraud becomes an increasingly significant threat.

We must remain vigilant and take proactive steps to protect ourselves from this emerging danger.

Preventive Measures Against Deepfake Fraud

Mitigating the risk of deepfake-related identity theft involves a combination of awareness, technology, and policy. Here are some suggested strategies:

Education and Awareness

Make sure that you and your team are aware of the existence of deepfakes and the potential risks associated with them. Regular training sessions can keep everyone informed about the latest threats and safety practices.

Verification Measures

Encourage double-checking and confirmation for sensitive transactions or actions. For instance, if an unexpected email requests a money transfer, follow up via a separate, verified communication channel.
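The out-of-band check described above can be sketched as a simple approval rule. This is only an illustration, not a production control: the `TransferRequest` fields, the `AMOUNT_THRESHOLD` value, and the channel names are all hypothetical and would need to match your organization's own policy.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical policy values (assumptions for illustration only).
AMOUNT_THRESHOLD = 1_000  # transfers at or above this amount need confirmation
TRUSTED_CHANNELS = {"phone_directory_callback", "in_person"}


@dataclass
class TransferRequest:
    requester: str
    amount: float
    request_channel: str                  # where the request arrived, e.g. "email"
    confirmed_via: Optional[str] = None   # channel used to confirm it, if any


def is_approved(req: TransferRequest) -> bool:
    """Approve a sensitive request only if it was confirmed out of band."""
    if req.amount < AMOUNT_THRESHOLD:
        return True
    # The confirmation must come from a trusted channel that is different
    # from the channel the request arrived on.
    return (
        req.confirmed_via in TRUSTED_CHANNELS
        and req.confirmed_via != req.request_channel
    )
```

For example, a large transfer requested by email is rejected until someone confirms it by calling a number taken from the company directory rather than from the message itself. The key design choice is that the confirmation channel must be independent of the request channel, so a convincing deepfake on one channel is not enough on its own.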

Advanced Security Systems

Implement security systems that can help identify deepfakes. There is ongoing research and development into technologies that can spot synthetic media, including blockchain-based solutions for verifying where content came from and AI algorithms that detect inconsistencies in deepfake content.

Establish Clear Procedures

Have procedures in place for reporting and dealing with suspected deepfake attacks. A swift response can limit the damage caused by a fraudulent act.

Strong Legal and Regulatory Frameworks

Lobby for laws and regulations that address the misuse of deepfakes. As a society, we need to push for legislation that balances the opportunities provided by AI technologies with the need to protect individuals and organizations from harm.

Deepfakes and Identity Theft – Final Thoughts

The threats posed by deepfakes are significant, and their potential misuse in identity theft is a pressing issue.

As we continue to integrate technology into every aspect of our lives, it is essential to remain informed about the potential risks and proactive in our defenses.

As a society, we must balance the promise of technology with the need to ensure safety and protect individuals from fraudulent activity.

Deepfakes and Identity Theft FAQs

What are deepfakes?

Deepfakes are synthetic media created by using artificial intelligence to mimic individuals’ physical appearances, voices, and behaviors with high accuracy.

How are deepfakes used in identity theft?

Deepfakes can be used in numerous ways for identity theft, including impersonation scams, social engineering attacks, deepfake-based phishing, creating false evidence, and spreading misinformation.

How can I protect myself from deepfake fraud?

You can protect yourself by staying aware of the threat, implementing verification measures for sensitive transactions, using advanced security systems, having clear procedures for handling suspected deepfake attacks, and advocating for robust legal and regulatory frameworks.

Can technology detect deepfakes?

Yes, there are emerging technologies, including AI-based systems and blockchain solutions, that can help in detecting deepfakes. However, as deepfake technology improves, the challenge of detection also increases.

What are some common examples of deepfake fraud?

Some common examples include scams where fraudsters impersonate executives to trick employees into transferring funds, deepfake-enhanced phishing attacks, and the use of deepfakes to create false evidence or spread misinformation.